Digital vigilantes use AI deepfakes to catch online predators in sting operations.

- Activists use AI deepfakes to pose as underage victims online

- Sting operations led to multiple arrests in 2023 alone

- Police warn of legal and ethical risks of vigilante justice

Digital vigilantes are increasingly turning to artificial intelligence to fight online predators, using deepfake technology to pose as underage victims in sting operations. The tactic has surged in popularity as child exploitation cases rise globally, with activists reporting dozens of arrests linked to their AI-driven stings in 2023 alone.

How AI deepfakes are used in sting operations

Volunteer groups and individual activists create realistic AI-generated profiles of fictional children to engage with predators on platforms like Facebook, Instagram, and Kik. Once a predator initiates contact, the vigilantes record conversations and share evidence with law enforcement. In some cases, the interactions are broadcast live to discourage further predatory behavior.

Law enforcement agencies have acknowledged receiving tips from these groups, though they emphasize that prosecutions rely on evidence collected by trained professionals. “We appreciate any assistance that helps protect children, but we urge caution,” said a spokesperson for Interpol. “Vigilante actions can compromise investigations and may not meet legal standards.”

Risks and ethical dilemmas of AI-driven justice

While activists argue that AI deepfakes level the playing field against tech-savvy predators, critics warn of potential pitfalls. False positives—where innocent individuals are wrongly targeted—remain a concern, particularly when AI-generated profiles are not carefully verified. Legal experts also question whether evidence collected by non-official actors will hold up in court.

A 2023 report by Europol highlighted the risks of “digital vigilantism,” noting that poorly executed stings could alert predators to law enforcement tactics, allowing them to evade capture. The agency recommended that activists work closely with authorities to ensure evidence integrity.

The technology behind the stings

The deepfake tools used in these operations rely on generative AI models trained on vast datasets of real children’s images and voices. Some groups use open-source software like DeepFaceLab, while others purchase commercial products designed for entertainment but repurposed for activism. The quality of these fakes has improved dramatically in recent years, making it harder for predators to distinguish real children from AI-generated personas.

Despite the technological sophistication, ethical questions persist. “At what point does this become entrapment?” asked Dr. Susan McIntyre, a cybersecurity researcher at MIT. “We must balance the fight against predators with the rights of the accused.”

What’s next for AI in law enforcement

Law enforcement agencies are beginning to explore AI tools for their own operations, though adoption remains slow due to concerns over bias and accountability. The FBI has tested AI-driven chatbots to mimic minors in undercover stings, but officials stress that human oversight remains critical.

For now, digital vigilantes continue to fill gaps where law enforcement resources are stretched thin. Advocacy groups like Predator Free America have called for clearer guidelines to ensure these stings remain ethical and effective.

As AI technology advances, the debate over its use in justice will only intensify—raising questions about who gets to play sheriff in the digital age.

What You Need to Know

- Source: France 24

- Published: May 15, 2026 at 15:27 UTC

- Category: World

- Topics: #france24 · #world-news · #europe · #advanced · #digital · #ai-deepfakes-predators

All reporting rights belong to the respective author(s) at France 24. GlobalBR News summarizes publicly available content to help readers discover the most relevant global news.

Curated by GlobalBR News · May 15, 2026

Related Articles

- US proposes 40% cut in Colorado River water for 3 states amid drought

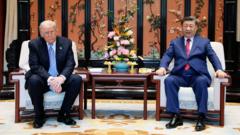

- Trump urges Taiwan to avoid independence amid rising China tensions

- Xi gives Trump private tour of secret garden in Beijing

🇧🇷 Portuguese Summary (English translation)

The internet has become a minefield for criminals who hide behind fake profiles, but a new wave of digital activists is turning the tables with an unlikely weapon: AI-generated deepfakes. In a plot worthy of a spy series, vigilante groups around the world have begun using the technology not only to unmask online predators but also to expose pedophilia and online harassment networks, in operations that have already led to arrests and the removal of illegal content.

In Brazil, where cases of child grooming rose by more than 300% during the pandemic, according to SaferNet, applying these techniques takes on particular urgency. Experts warn, however, that indiscriminate use of deepfakes can lead to false accusations and even violate fundamental rights, such as the right to one's own image. The controversy divides opinion: supporters see a powerful tool against crime, while critics demand regulation to prevent abuse, especially in a country where the justice system is still finding its feet in handling cybercrime.

The debate is only beginning, and the next step may determine whether Brazil embraces this innovation with caution or leaves loopholes that let criminals go unpunished.

🇪🇸 Spanish Summary (English translation)

A group of digital activists is transforming the fight against online child exploitation with an unexpected tool: deepfakes generated by artificial intelligence. Using hyperrealistic fake profiles, these vigilantes infiltrate networks and forums to unmask sexual predators who operate under the anonymity of the internet, dismantling their abuse networks with compelling evidence.

The method is not free of controversy, however. While some hail it as an effective way to combat a crime that thrives in the digital dark, privacy and ethics experts warn of the risks of creating fake content, even for just ends. For Spanish speakers, the issue is especially relevant given that countries such as Spain and Mexico are among those most affected by online child pornography, according to recent reports. The tension between justice and legality in still-unregulated territory raises a debate that crosses borders and demands urgent reflection on the limits of technology in the service of society.
